OpenSandwich

A deliberately low-stakes benchmark for a real alignment problem: can models recover a fuzzy human category when the category is lunch, the humans disagree, and the edge cases get deeply stupid?

It is also small and scientifically annoying by design: twenty photos, one binary judgment, and a category boundary that humans themselves fail to stabilize. That is not a bug. It is the whole experiment.

In other words, we are stress-testing multimodal reasoning with an open-faced sandwich, a hostile ontology, and a crowd baseline that occasionally wakes up and chooses chaos. If a model cannot survive this, it probably should not sound so smug elsewhere.

Under development: this benchmark and its published results are provisional, not final.

- Tokens toasted: 226.7M tokens consumed across the published benchmark run.
- Total requests: 155.5K, covering the benchmark calls plus 14K sentiment-analysis API calls.
- Human judgments: 13.1K from 656 respondents across all 20 photos.
- Model judgments: 141.5K from 7,077 full passes, published March 14, 2026.
- You can vote: the live survey is open. Hot dog? Hamburger? Cast your vote and help grow the human baseline for a safer sandwich-alignment future.
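The headline counts are consistent with each other if every respondent and every full model pass judged all 20 photos, and if each model judgment was one benchmark request. Those are our assumptions, not published details. The sketch below only checks the arithmetic against the cards; `to_k` is a hypothetical formatting helper.

```python
# Published headline counts from the stats cards.
PHOTOS = 20
RESPONDENTS = 656
MODEL_PASSES = 7077
SENTIMENT_CALLS = 14_000  # "14K" on the card, already rounded

# Assumption: every respondent and every full pass judged all 20 photos,
# and each model judgment cost exactly one benchmark request.
human_judgments = RESPONDENTS * PHOTOS               # 13,120
model_judgments = MODEL_PASSES * PHOTOS              # 141,540
total_requests = model_judgments + SENTIMENT_CALLS   # 155,540


def to_k(n: int) -> str:
    """Format a count the way the cards do, e.g. 13120 -> '13.1K'."""
    return f"{n / 1000:.1f}K"


print(to_k(human_judgments), to_k(model_judgments), to_k(total_requests))
# 13.1K 141.5K 155.5K
```

All three recomputed figures match the published cards, so no judgments appear to be missing or double-counted under these assumptions.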

Humans and models get the same blunt question: is this a sandwich or not?

Repeated runs turn one-off guesses into a ranking that survives variance.

If a model fumbles this category, its confidence elsewhere deserves scrutiny.
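One way to see why repeated runs help: if each pass yields a single yes/no answer per photo, the binomial standard error of the estimated yes-rate shrinks roughly as 1/sqrt(n) in the number of passes. The sketch assumes exactly that setup; `yes_rate_with_se` is a hypothetical helper, not the benchmark's published code, and the published scoring may aggregate passes differently.

```python
import math


def yes_rate_with_se(answers: list[bool]) -> tuple[float, float]:
    """Estimate one model's yes-rate on one photo from repeated passes.

    Hypothetical helper: assumes each pass returns a single yes/no answer.
    Returns (yes_rate, binomial standard error).
    """
    if not answers:
        raise ValueError("need at least one pass")
    n = len(answers)
    p = sum(answers) / n  # True counts as 1, False as 0
    # The binomial standard error shrinks as 1/sqrt(n), so more passes
    # give a steadier estimate of the same underlying behavior.
    se = math.sqrt(p * (1 - p) / n)
    return p, se


# Same 75% yes-rate, measured over 4 passes vs. 400 passes.
print(yes_rate_with_se([True] * 3 + [False]))
print(yes_rate_with_se([True] * 300 + [False] * 100))
```

With 100 times as many passes, the standard error is 10 times smaller, so a ranking built on such averages is much less likely to reshuffle between reruns.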

Percent Forecast Benchmark Ratings

| Rank | Model | Score | Confidence | Crowd match |
|---|---|---|---|---|
| Human | Human | 100.0 | | |
| 🥇 1 | | 72.8 | | |
| 🥈 2 | | 72.1 | | |
| 🥉 3 | | 71.0 | | |
| 4 | | 65.9 | | |
| 5 | | 64.3 | | |
| 6 | | 64.2 | | |
| 7 | | 63.7 | | |
| 8 | | 63.5 | | |
| 9 | | 62.1 | | |
| 10 | | 59.5 | | |
| 11 | | 59.5 | | |
| 12 | | 58.0 | | |
| 13 | | 57.4 | | |
| 14 | | 56.1 | | |
| 15 | | 54.5 | | |
| 16 | | 52.0 | | |
| 17 | | 51.4 | | |
| 18 | | 50.7 | | |
| 19 | | 50.6 | | |
| 20 | | 46.2 | | |
| 21 | | 46.2 | | |
| 22 | | 42.0 | | |
| 23 | | 38.7 | | |
| 24 | | 38.2 | | |
| 25 | | 34.4 | | |
| 26 | | 32.6 | | |
| 27 | | 32.5 | | |
| 28 | | 32.0 | | |
| 29 | | 29.0 | | |
| 30 | | 28.4 | | |
| 31 | | 28.2 | | |
| 32 | | 27.1 | | |
| 33 | | 24.5 | | |
| 34 | | 24.3 | | |
| 35 | | 23.0 | | |
| 36 | | 20.6 | | |
| 37 | | 20.3 | | |
| 38 | | 19.7 | | |
| 39 | | 19.5 | | |
| 40 | | 18.1 | | |
| 41 | | 17.7 | | |
| 42 | | 17.0 | | |
| 43 | | 16.1 | | |
| 44 | | 15.3 | | |
| 45 | | 11.2 | | |
| 46 | | 11.1 | | |
| 47 | | 10.8 | | |
| 48 | | 10.4 | | |
| 49 | | 9.4 | | |
| 50 | | 8.8 | | |
| 51 | | 6.1 | | |
| 52 | | 2.6 | | |
| 53 | | 0.1 | | |
| 54 | | -5.4 | | |
| 55 | | -5.6 | | |
| 56 | | -6.9 | | |
| 57 | | -12.5 | | |
| 58 | | -13.5 | | |
| 59 | | -14.7 | | |
| 60 | | -28.5 | | |
| 61 | | -36.5 | | |
| 62 | | -57.4 | | |
| 63 | | -69.8 | | |
| 64 | | -91.7 | | |
| 65 | | -178.0 | | |

Benchmark images that expose the biggest cracks

These are the photos that cause the best arguments. Each card below shows the human split, the model average, the largest human-model gap, and the model that landed closest to the crowd.

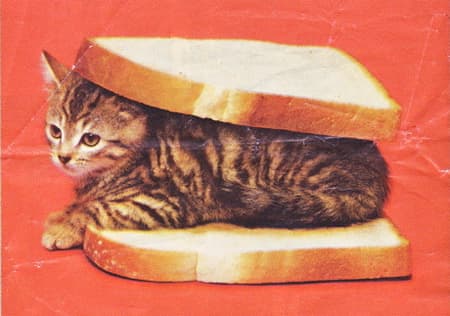

07. Kitten in Bread

A kitten has been placed between two slices of bread, producing a meme that is structurally sandwich-shaped...

- Human: 54.2% yes, 45.8% no
- Model average: 8.7% yes, 91.3% no
- Max gap: 54.2%
- Closest model: openai/gpt-4.1-nano

20. Bagel PB&J

A bagel hacked perpendicular into a peanut-butter-and-jelly arrangement turns a children's lunch into topol...

- Human: 46.6% yes, 53.4% no
- Model average: 83.4% yes, 16.6% no
- Max gap: 53.4%
- Closest model: minimax/minimax-01

15. Chicken Wrap

A chicken Caesar wrap bundles meat, lettuce, and sauce into a tortilla tube that lives permanently in sandw...

- Human: 22.6% yes, 77.4% no
- Model average: 47.7% yes, 52.3% no
- Max gap: 77.4%
- Closest model: bytedance-seed/seed-2.0-lite

14. Cookie PB

Two cookies with peanut-butter filling are stacked into a dessert sandwich that feels like it was greenlit...

- Human: 51.5% yes, 48.5% no
- Model average: 34.8% yes, 65.3% no
- Max gap: 51.5%
- Closest model: qwen/qwen3.5-flash-02-23
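The cards do not define "Max gap" or "Closest model". One reading fits every card above: the max gap is the largest absolute difference between the human yes-rate and any single model's yes-rate, and the closest model is the one with the smallest difference. For example, on Bagel PB&J a model voting 100% yes against the crowd's 46.6% gives exactly the listed 53.4%. The sketch assumes that reading; `gap_summary` and the model names are hypothetical.

```python
def gap_summary(human_yes: float, model_yes: dict[str, float]) -> tuple[float, str]:
    """Return (max_gap, closest_model) for one photo.

    Assumed reading of the cards: all rates are "yes" percentages,
    max gap = largest |human - model|, closest = smallest |human - model|.
    """
    if not model_yes:
        raise ValueError("need at least one model")
    gaps = {name: abs(human_yes - rate) for name, rate in model_yes.items()}
    max_gap = max(gaps.values())
    closest = min(gaps, key=gaps.get)  # ties go to the first model listed
    return round(max_gap, 1), closest


# Hypothetical per-model yes-rates for 07. Kitten in Bread (human: 54.2% yes).
print(gap_summary(54.2, {"model-a": 0.0, "model-b": 10.0, "model-c": 50.0}))
# (54.2, 'model-c')
```

Under this reading, a max gap equal to the human "yes" or "no" share means at least one model voted unanimously the other way, which is what every card here shows.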